
Hello There!

Welcome to AM Coffee time, a publishing website for my personal blogs, made for MCO427.

This website will contain my personal thoughts about misinformation, along with research on what is being misconstrued in our media and how.

24-Hour Media Diet: Spotting Misinformation

For this blog, we are to create a 24-hour diary. The task consists of logging the social media content I consume, emphasizing any content that seems blown out of proportion or misinterpreted. This will be a dive into how I interact with media.

Saturday 4/28/26

- 9:00 AM: Alarm goes off on my phone. This signals a long Saturday ahead for me. I snooze my alarm and get ready. Although some days I am guilty of scrolling Instagram first, it did not happen today.

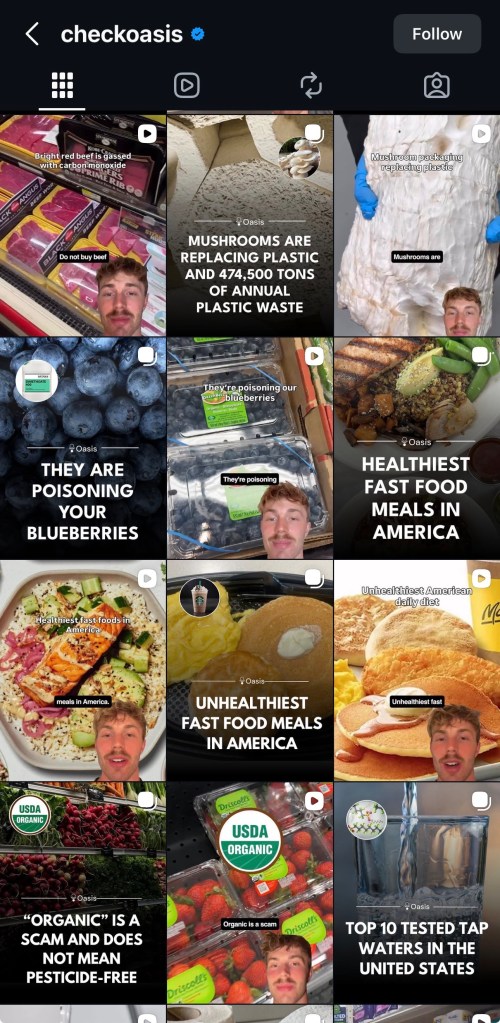

- 10:30 AM: Arrived on campus to tackle my capstone collection. I check my phone as I ride up in the elevator, read my messages, and play music (currently I have been looping LUX by Rosalia). Once I set up my workstation in class, I scroll through Instagram. There, lots of friends’ posts and fashion editorials welcome me to the platform. Example A, my feed.

- My Instagram feed is somewhat tailored to the accounts I follow, but I have noticed that posts from a lot of random accounts have been filling it. For example, the middle image above is from an account I do not follow and have never interacted with. A title in bold, eye-catching letters usually means exaggerated content, so I usually scroll past these accounts, as I have noticed they are being pushed by the algorithm. Today is different, so I decided to dive into the random posts I have seen.

- 11:00 AM: By now, I have scrolled enough through Instagram and absorbed all kinds of posts. I decided to go back to the account that posted the image with the bold letters “Beef is gassed…”: @Checkoasis on Instagram. Example B, the account this image originates from.

Short analysis of this account’s posts and intent, from my point of view:

A lot of what they post falls into the same type of content you usually see on Instagram “fact pages.” These pages often highlight bold claims or viral statements and then try to correct them, but they can sometimes oversimplify or leave out context, as mentioned by PBS: https://www.pbs.org/newshour/classroom/lesson-plans/2023/01/lesson-plan-how-to-fact-check-the-fact-pages-on-instagram?.com

When it comes to Instagram as an information outlet, I tend to scroll and focus only on the image that is shown. For videos, it depends on what catches my interest, such as headlines I am interested in or curious about. I take this app with a grain of salt, as I am aware that misinformation runs rampant on social media apps. Unfortunately, a lot of people who consume the same media as me aren’t as aware or as cautious about believing headlines. Accounts such as @checkoasis feed into two problems. Problem one is their images with bold claims: this type of post can quickly lead people to misinterpret information. I can foresee people reading a headline such as “Beef is gassed with carbon monoxide” and posting it as a comment on a different post, claiming it as their own factual thought. Problem two is the lack of explanation in the image itself. There are no statements, good or bad, that help viewers understand the title within the first second of viewing (most of the time, that is all it takes for someone to read something and believe it). This thought is explained in more detail in an article I read (found through Reddit) from the news outlet El País, which explains how a lack of understanding or information funnels into misinformation.

- 1:00 PM: By this time I decided to put my phone down and, while playing Lux by Rosalia, continued working on my five-look fashion collection.

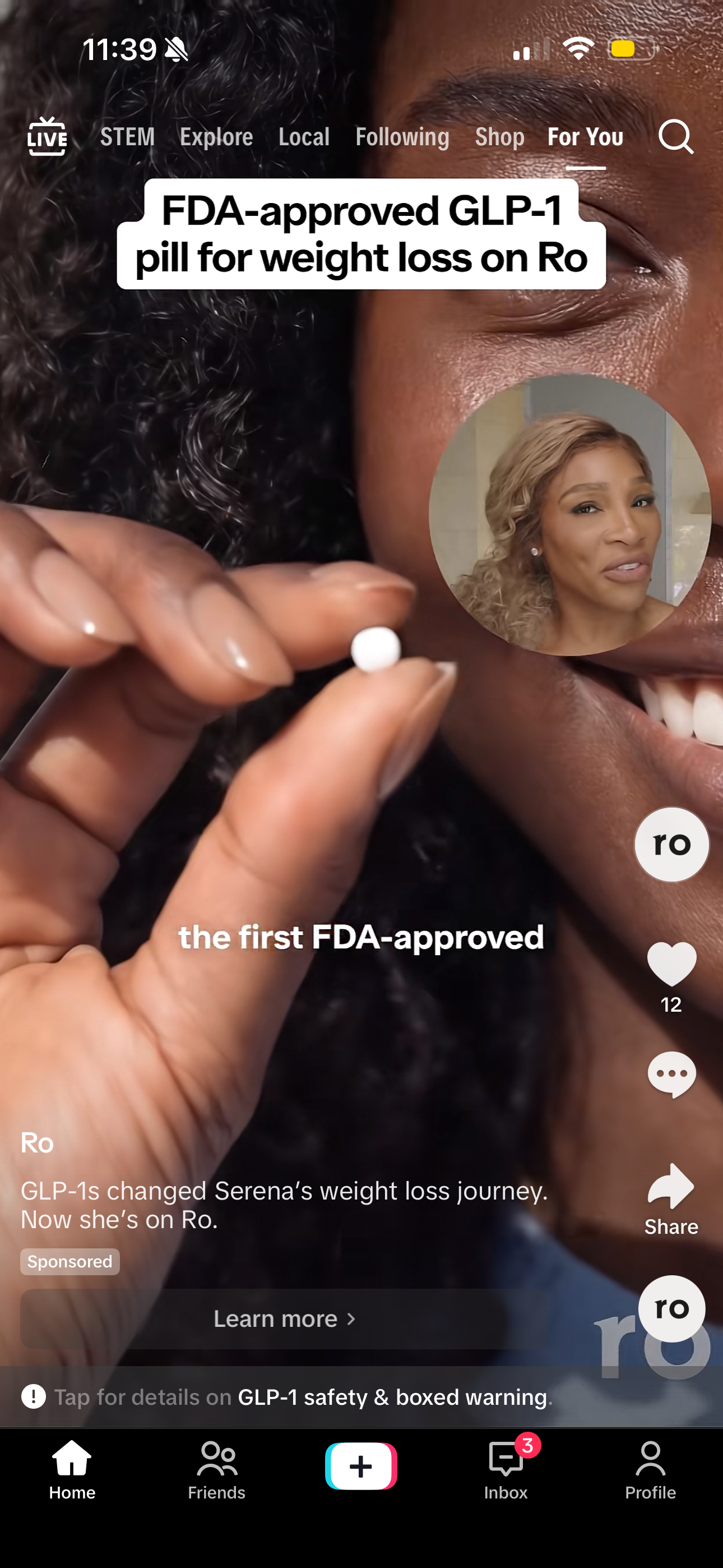

- 3:00 PM: I have now come to a point where I need a break from sewing, so I sit and go back to scrolling on my phone. This time I scroll through TikTok, as I want to digest video content; most of the time it is memes and entertaining content from random creators. (My TikTok account is what I call a ghost account, as I do not engage with content or have a profile that could be linked to me. I like to think this keeps my feed random, and most of the time it does.) Example C, a couple of videos I scrolled through.

- 3:05 PM: I noticed an ad for GLP-1 by a company called Ro, which I have been getting quite often. The ad uses words like “affordable,” “FDA approved,” and “weight loss,” which are key for its targeted customers. Although I did not dive deeper into this ad because I was passively scrolling, the information in the video seemed very eye-catching and could make me consider the product if I were looking to lose weight. A big issue with this ad is the lack of information and the big push for ideal results that advertisements always make, which in my opinion leads to misconstrued expectations. I went back to scrolling for about 30 more minutes.

- 3:30 PM: I resumed my sewing projects and continued listening to music to keep me focused and entertained.

- 4:30 PM: Decided to take a longer break and have lunch with my friend, who is sewing her collection along with me.

- 5:00 PM: While having lunch, my friend scrolls through TikTok and shares funny memes and videos she thinks I might like, but nothing worth diving deep into.

- 6:00 PM: My friend and I have returned to our long Saturday, sewing and patterning look 5/5 in my capstone collection.

- 9:00 PM: By this time I have been home long enough to scroll more on TikTok. My feed is filled with memes and funny content that does not portray misconceptions or push a negative narrative, even for comedy.

Overall, after doing this 24-hour media diary, I realized how easy it is to just scroll through content without really questioning it. A lot of what I saw, especially on Instagram and TikTok, was quick and attention-grabbing, which makes it easy to take things at face value. Looking back at posts like the ones from @checkoasis, the bold claims stand out more. Even if something is technically true, the way it’s presented can still be misleading. For example, a headline like “beef is gassed with carbon monoxide” can easily be taken out of context, and I can see how someone might repeat that somewhere else thinking it’s a full fact. This assignment made me realize that I don’t always stop to think about where information is coming from, especially with content that feels normal or fits into what I already see every day. There’s definitely a mix of reliable and questionable content, and it’s not always obvious which is which. Overall, it just made me more aware of my habits and how important it is to actually think about the content instead of just scrolling past it.

(Blog 2) Evaluating misinformation education tools

For this blog, I dive into tools that teach and reinforce spotting misinformation through a clear understanding of how it starts, what enables it, and how widely it spreads.

RumorGuard: Fact checker

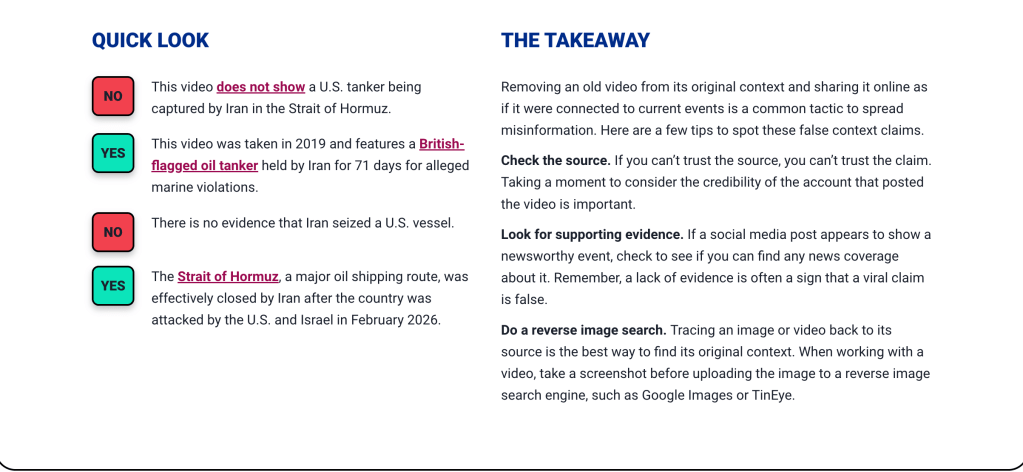

Using RumorGuard from the News Literacy Project felt more realistic to how I actually experience information online. Unlike the Bad News game, this was more about slowing down and analyzing real posts that have already spread. The most interesting aspect of the examples above is that each of them takes a systematic approach to analyzing the source, evidence, context, and reasoning. This made me realize that I never really undertake such a comprehensive analysis when I scroll through my newsfeed.

One of the things I realized is that misinformation does not have to be completely false; it can simply lack context or be presented in a distorted way. RumorGuard analyzes actual viral pieces of misinformation and explains why they are misleading. According to the News Literacy Project, the platform is designed to help people evaluate claims using five credibility factors and apply those skills to other content they see.

This also relates to our class readings about the factors that cause misinformation to stick, such as repetition and confirmation bias. After seeing various posts, I noticed recurring themes among them. All in all, I believe that RumorGuard is an effective program because it teaches you to be critical in practical situations. It is not only about understanding the concept of misinformation but also about breaking down the process step by step.

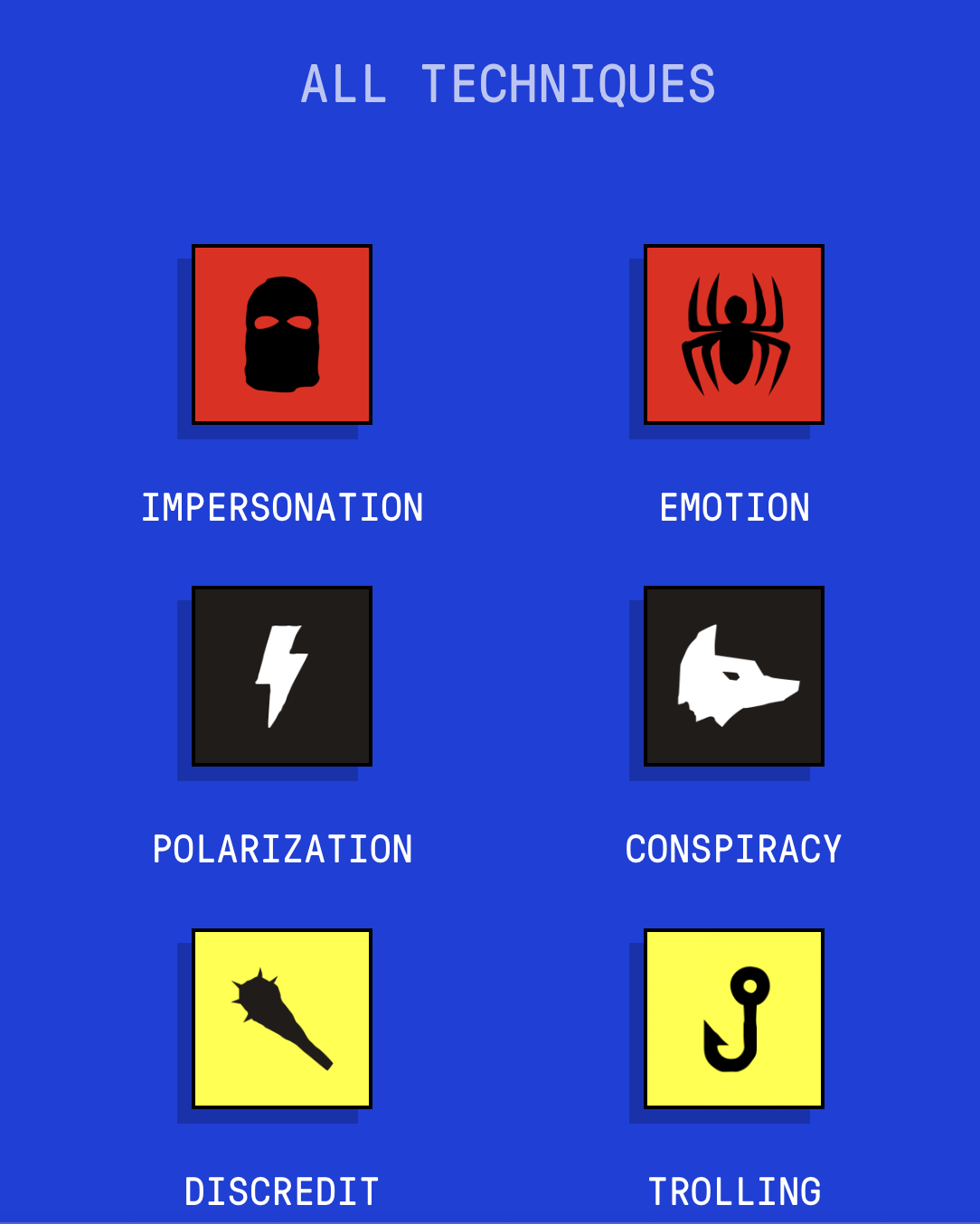

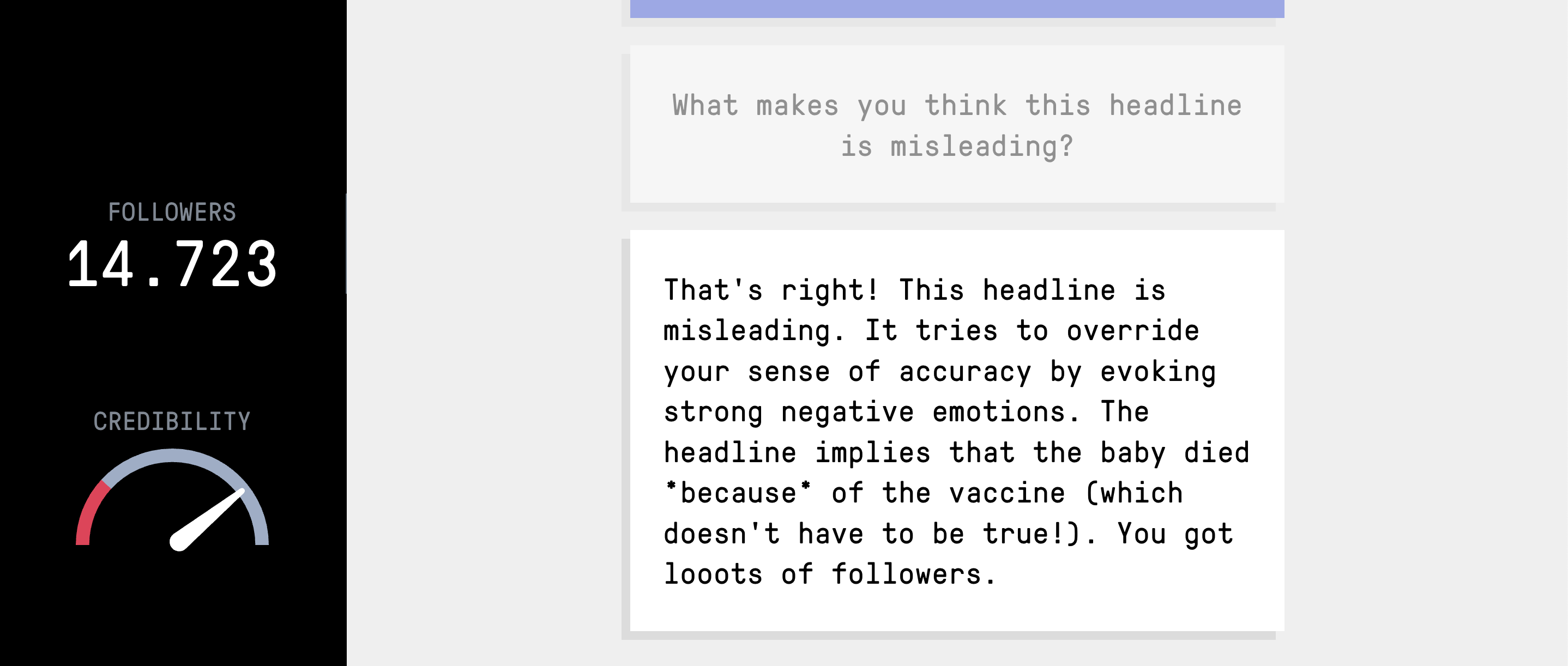

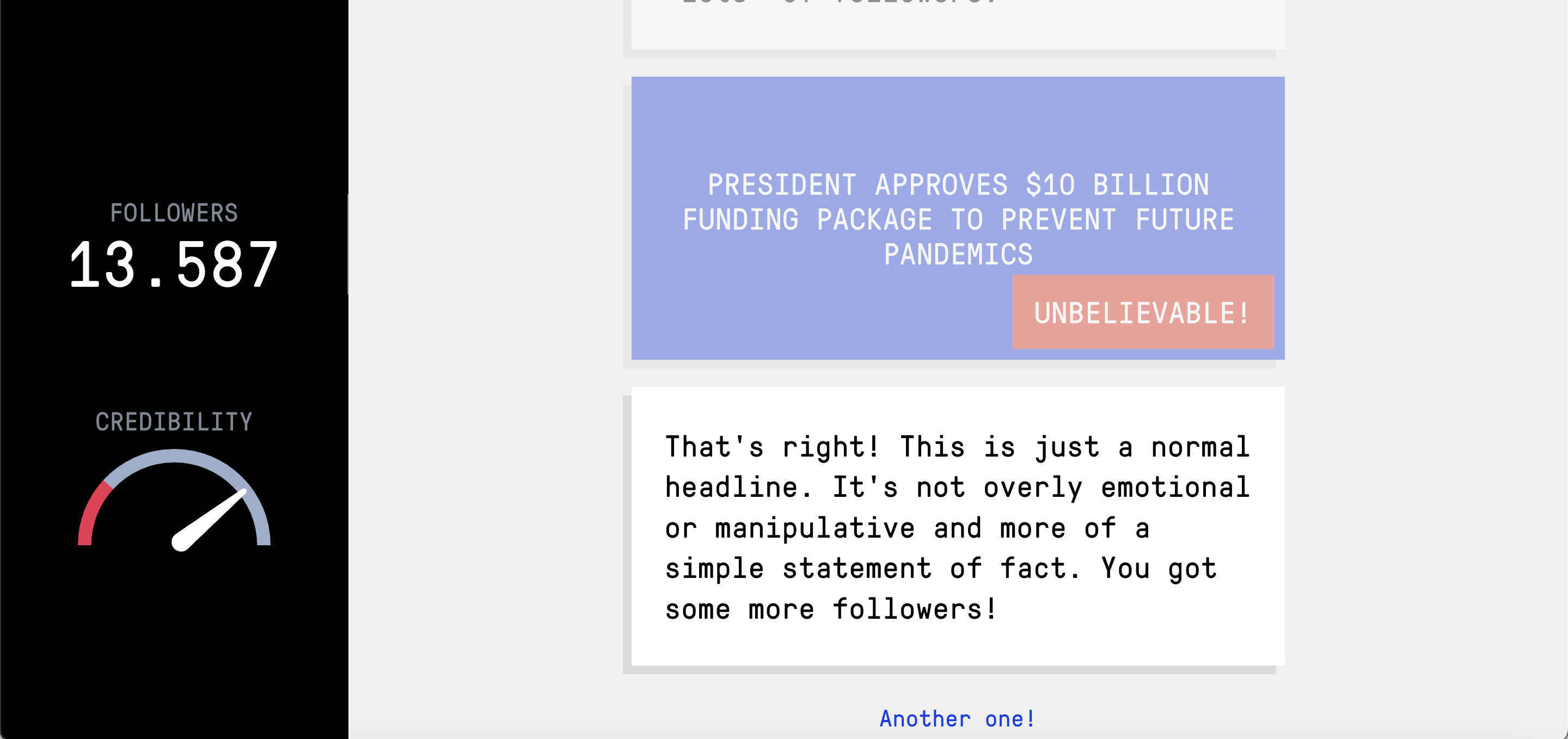

Bad News: Game simulation

Bad News opened my eyes to how much misinformation is planned and thought out. While at first I perceived the game as just that, a game, the more I progressed the more I noticed how effective it can be to grab someone’s attention when resorting to such measures as impersonation and emotionally charged statements. The one part that caught my attention in particular was impersonation, since it showed me just how quickly credibility could be built while not necessarily being credible. This is linked to our discussion on the tendency to base our judgements on impressions rather than verification.

However, what impressed me most is the connection between emotion and polarization. Through the process, players are encouraged to employ such emotions as fear and anger in order to generate reactions, which can be linked to the material on “Why do our brains believe lies?” published in The Washington Post about how people are inclined to believe certain information based on emotional response. Moreover, it is also associated with the findings presented by Toomey in his study in Biological Conservation.

The use of conspiracy and discrediting tactics also connects to the reading by A. R. Landrum, which explains how conspiracy thinking makes people more vulnerable to misinformation. I believe that this game works well because, rather than being taught from a book, you can feel and understand the strategies used in the process. It helped me become more alert to how easily information spreads on social media platforms.

(Blog 3) Website or claim analysis

In this blog, I will dive into the research process I use to look into baseless claims. The reason behind this is that whenever we create a baseless claim, we are forming a narrative that is most likely false, for the purpose of entertaining people and feeding their need for argument. From our classroom discussion, I learned that misinformation can spread even when there is no evidence behind it.

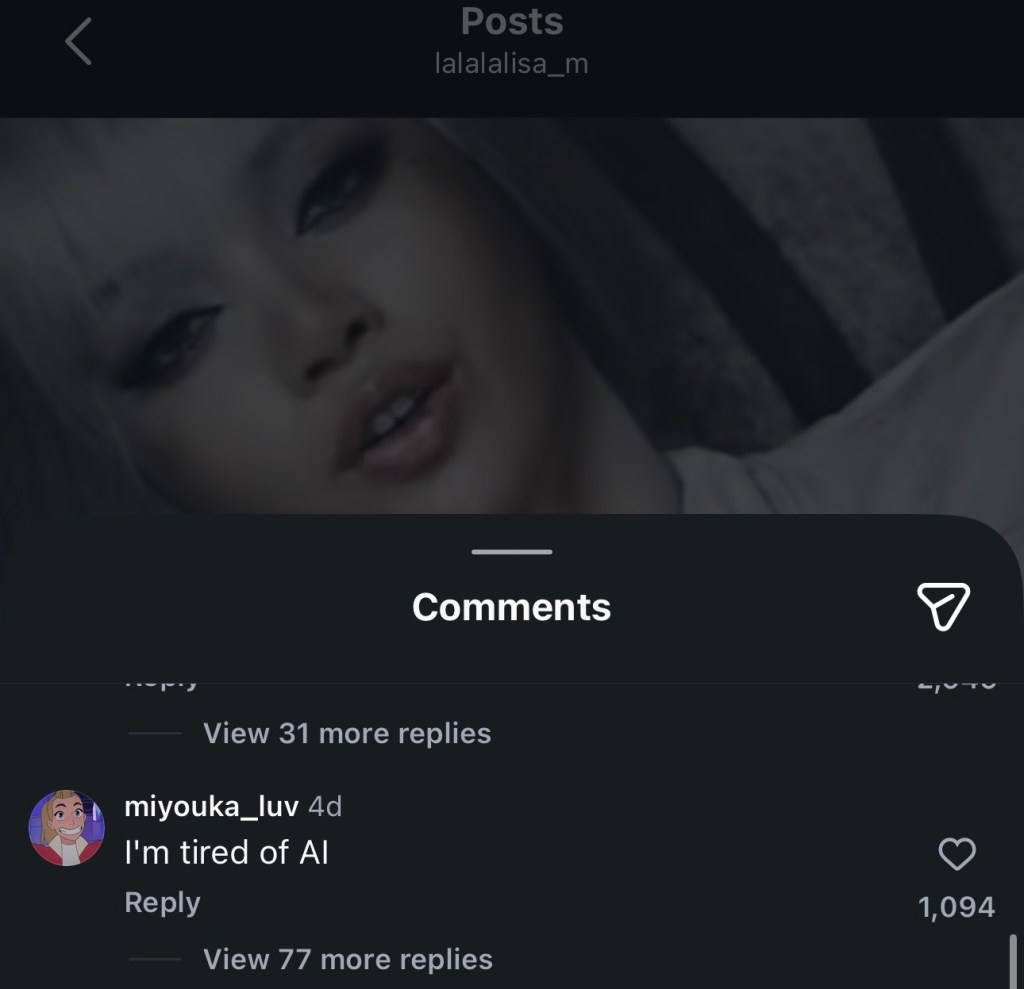

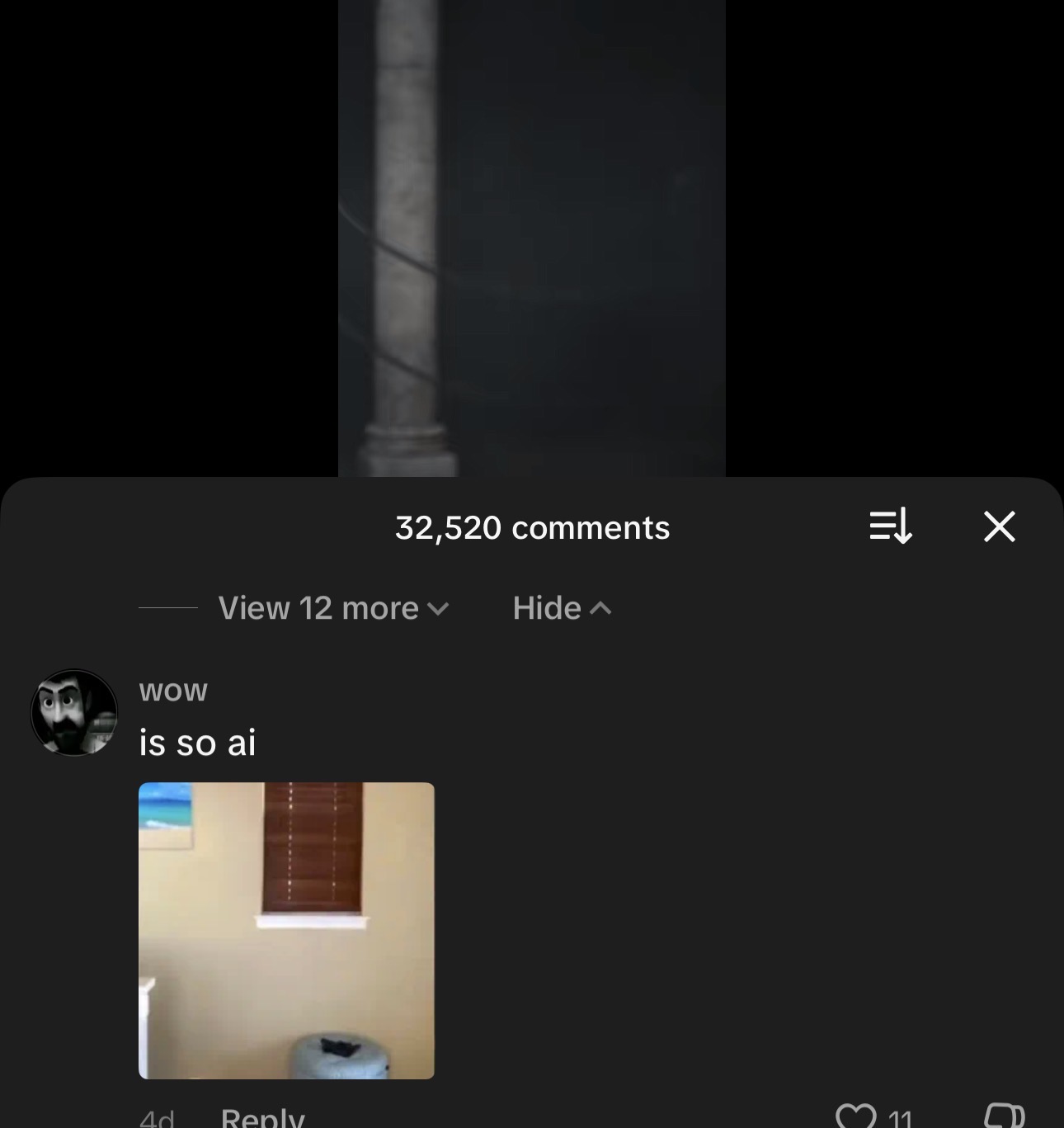

This week, more precisely on April 8, 2026, the electronic dance music (EDM) artist Anyma collaborated with Lisa to release a song named “Bad Angel.” In short, the video uses some impressive graphics, like virtual reality and CGI environments. So what makes this relevant to me? As a social media consumer, I have witnessed people claim AI usage in well-developed work, either due to a lack of knowledge or simply to discredit the origin of the content, which as a creative myself I find degrading.

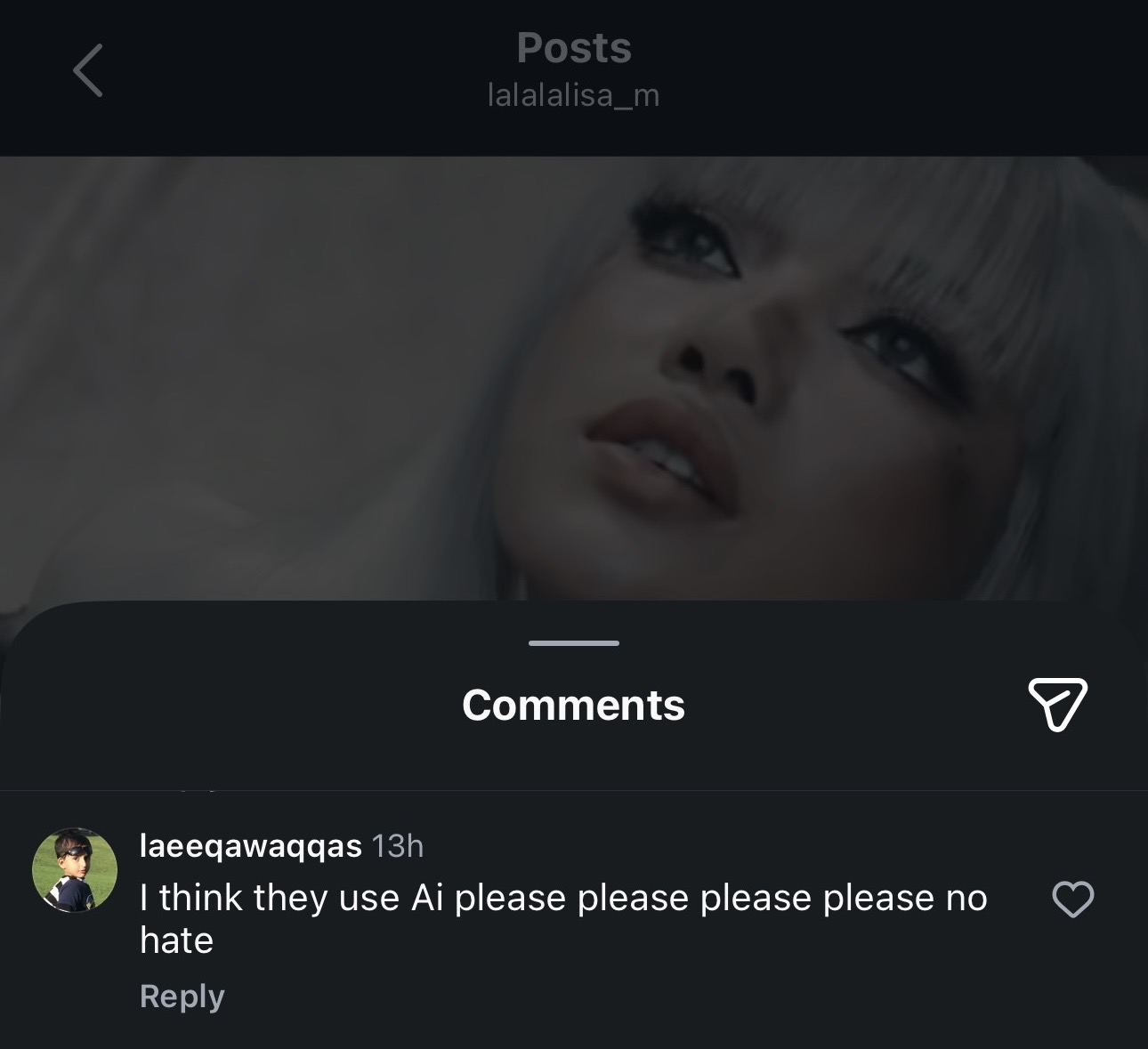

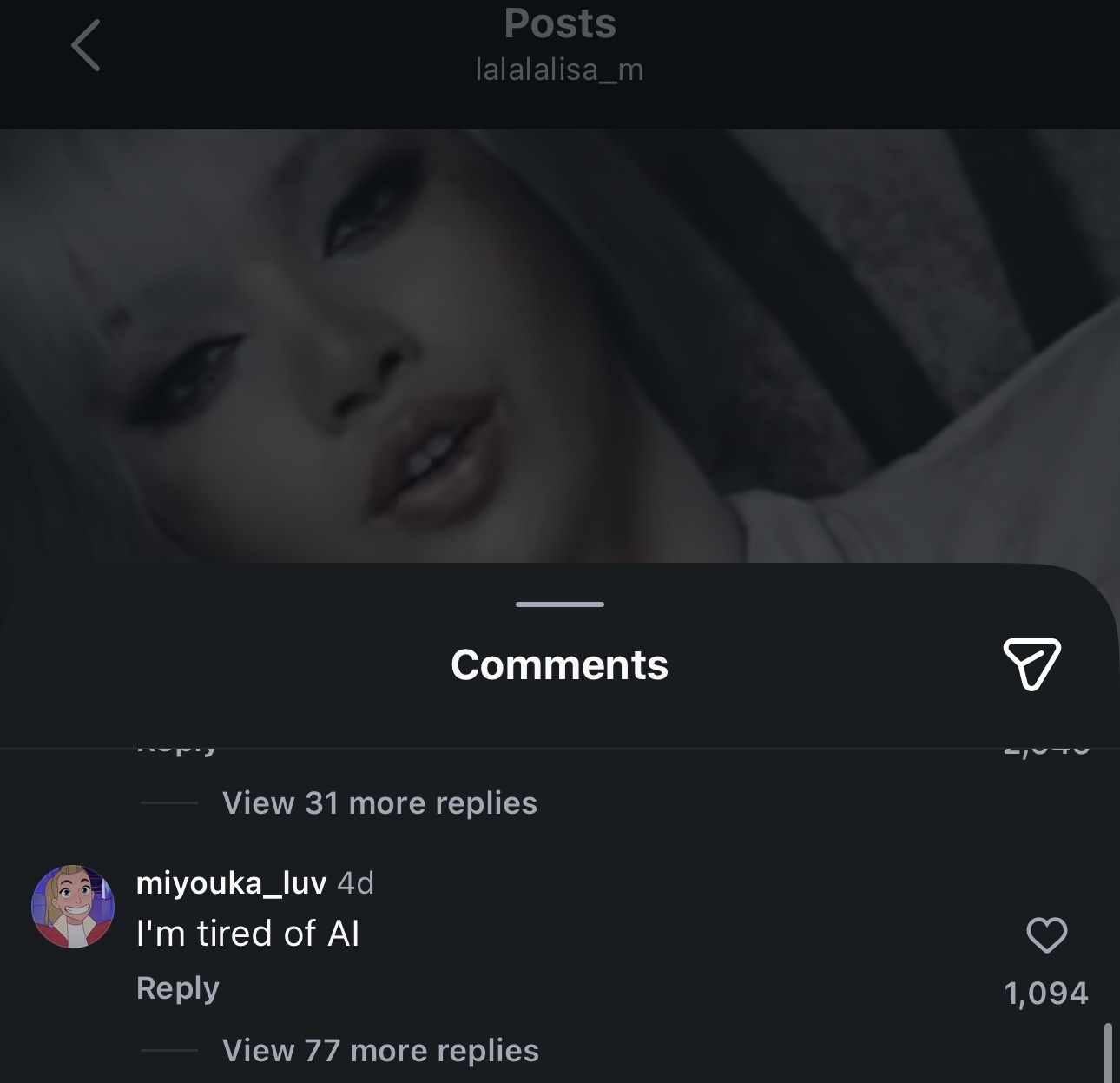

After a quick scroll on Instagram, I encountered comments claiming the video was created using AI technology. This claim was just that: a claim, not followed up with any information or evidence as to how the user came to that conclusion. It matters because the claim discredits a group of creatives by suggesting, without proof, that AI had a major role in creating this content.

I have been intrigued by this claim, and I will now walk through the steps I took when researching it.

First: Looking Across Platforms

I decided to look into the comments from multiple social media sites. This all started on Instagram through the comment section on Lisa’s page. From there, I moved to TikTok. The reason behind these choices is that TikTok tends to spread videos at a very high speed, often reaching millions within minutes, making it a strong platform for both trends and misinformation.

Second: Identifying the Claims

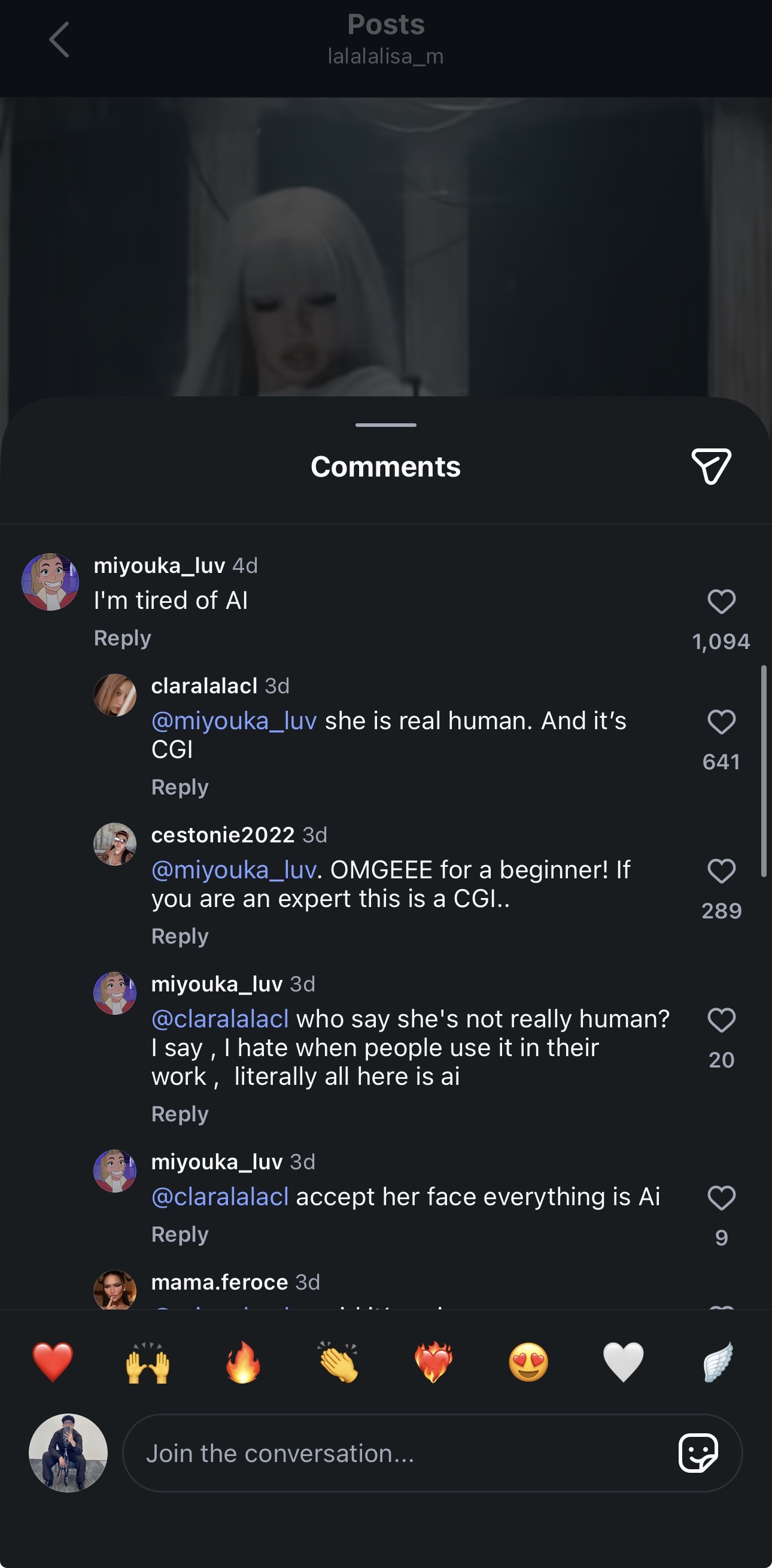

Through my investigation on Instagram, I discovered several accounts that suggested the video was done with the help of AI. Most of those accounts, however, were just personal ones belonging to ordinary users. Also, a few of the mentioned accounts did not have any content at all, or nothing related to the owner, and looked very much like bot accounts, which we learned about in our Module 4 lecture.

More people seemed to agree with the statement about AI involvement when posting their thoughts on TikTok. The same pattern from Instagram continued: these were mostly personal or bot accounts, with no proof to back up their statements. One account that particularly stood out was called @Adèl. It stated that the video was created with the help of AI. Other users then pointed out that the visuals in the video were CGI, to which @Adèl replied that they did not care much about the correct answer.

Third: Stepping Back (SIFT Method)

At this stage, rather than simply confirming or denying the claim, I stopped. As I learned in Module 4, this is an important step in the SIFT strategy. Just because the video looks highly digital or surreal doesn’t necessarily mean that it was created with AI. Digital production comes in many forms, and it is often difficult to distinguish between them without proper knowledge.

Fourth: Lateral Reading

Afterwards, I shifted my attention away from social media to try and get some information on my own. I searched using key phrases such as:

“Bad Angel Anyma Lisa video production”

“Is Bad Angel AI generated”

What I learned is that the video was not produced using AI but rather through CGI, VFX, and physical set design. As stated in a behind-the-scenes article, the video “used physical set design with CGI and VFX, not AI.”

This video belongs to an overall project developed by Anyma, combining the digital world and storytelling techniques. The details can be found in a recent Vogue interview, where it is mentioned that the visuals belong to a “digital afterlife concept where human and artificial worlds merge.”

Fifth: Comparing the Claim to the Evidence

At this point, I compared both sides. The claim being made online was that the video is AI-generated, but there was no actual evidence supporting that. On the other hand, multiple sources explained that the visuals come from advanced CGI and VFX techniques. The video itself is meant to look futuristic and hyper-real, which can easily be mistaken for AI by viewers who aren’t familiar with digital production.

Final Conclusion

After going through this process, I came to the conclusion that the claim that the Bad Angel video is AI-generated is not supported by credible evidence. The claim seems to come more from assumption or emotion (hate) than from fact, likely because the visuals are high in quality and somewhat unreal.

This process showed me how easy it is for misinformation to spread, especially when people don’t take the time to verify what they are seeing. It also reinforced the importance of slowing down and actually looking into a claim before believing it or repeating it. Taking a few extra steps, like checking sources and looking for evidence, can prevent the spread of false claims and give proper credit to the creatives behind the work. As we learned in class, using methods like lateral reading and SIFT is important, especially in digital spaces where information moves fast and not everything is accurate.

(Blog 4) Assessing current platforms’ attempts to curb misinformation

Misinformation is something I come across almost every time I scroll. Whether it’s on Instagram or TikTok, there is always some type of post that feels exaggerated or taken out of context. Most of the time, it looks believable enough that you don’t really question it, especially if you are just scrolling fast. Because of this, platforms have started putting systems in place to try to control what gets spread.

For this blog, I looked into how both platforms handle misinformation, and then compared that to what I actually notice when I use them.

Instagram’s Approach:

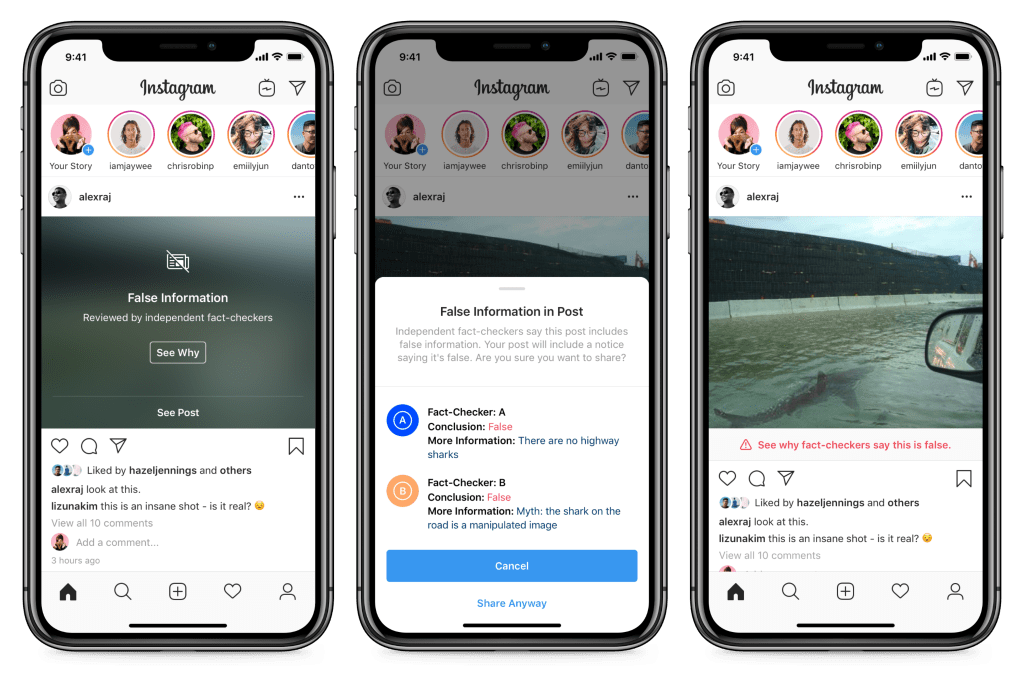

Instagram focuses on fact-checking and limiting reach. According to Meta, when something is rated as false by fact-checkers, they label it and reduce how many people see it, especially by keeping it off the Explore page

https://about.fb.com/news/2019/12/combatting-misinformation-on-instagram/

They also track accounts that repeatedly post misinformation. If someone keeps posting misleading content, their overall reach can go down, not just one post

https://transparency.meta.com/policies/community-standards/misinformation/

This is something I have actually seen while scrolling. There are posts that look normal at first, but then you see a “false information” label under it. Sometimes the image is blurred and you have to click through to see it. It kind of forces you to pause, but at the same time, I don’t always click “learn more.” Most people probably don’t.

Lately, Instagram has been doing something new when it comes to this issue. Meta revealed that they are switching from third-party fact-checkers to a user-powered model that involves users adding context

https://www.semafor.com/article/01/07/2025/meta-ends-fact-checking-program-on-facebook-and-instagram

Experts raised concerns about this change early on. According to Harvard’s School of Public Health, eliminating structured fact-checking may lead to an increased spread of false information, particularly scientific and medical misinformation. https://hsph.harvard.edu/news/metas-fact-checking-changes-raise-concerns-about-spread-of-science-misinformation/

From my point of view, this explains why some posts feel like they slip through now. It feels less consistent compared to before.

TikTok’s Approach:

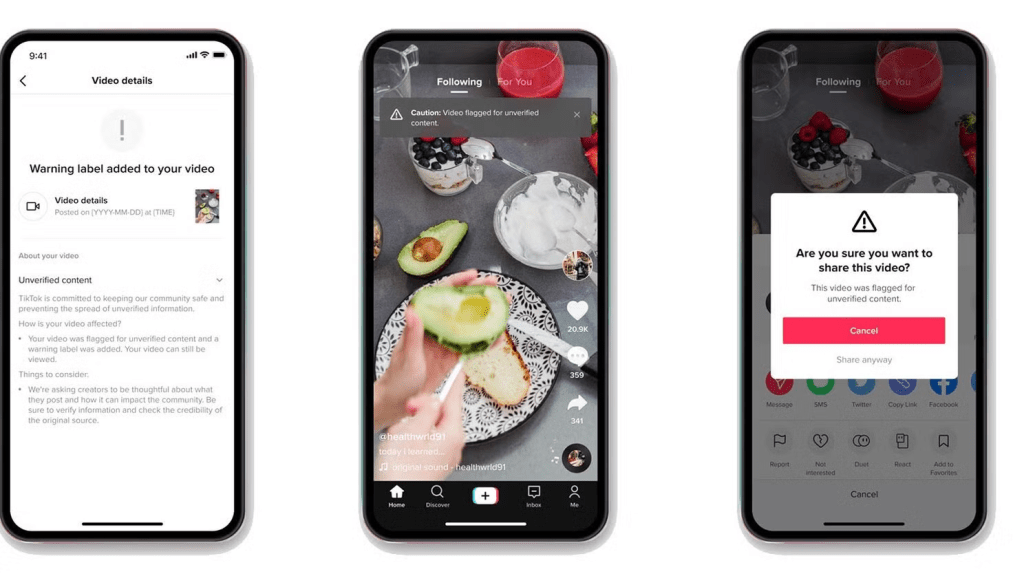

The way TikTok deals with misinformation is somewhat different. Instead of flagging all false information, TikTok tries to manage its spread. As mentioned in their Safety Center, while they delete any harmful misinformation, they also collaborate with fact checkers, and restrict the distribution of potentially misleading content.

https://www.tiktok.com/safety/en/misinformation/

This is because misinformation moves very quickly. A study from MIT showed that false news spreads faster and further than true news on Twitter.

https://www.science.org/doi/10.1126/science.aap9559

Therefore, instead of responding to issues after the fact, the TikTok app aims to slow things down by not promoting certain videos on the “For You” page.

In my opinion, the moderation on TikTok is less evident than that of Instagram. Although I do not see warnings as I did on Instagram, I can observe that some clips simply do not travel far. On the other hand, there have been instances where I saw clips with very shocking content get many views almost instantly.

This reminds me of what I noticed in my media diary. The content that stands out the most is usually the most dramatic or simplified. That same type of content is what spreads the fastest.

Do These Policies Actually Work?

I feel that both platforms are making an effort; however, they are still not solving the problem completely.

One of the major problems is the speed at which misinformation spreads. It spreads much faster than fact-checking can be performed. This is supported by research showing that false news travels faster than true news.

https://www.science.org/doi/10.1126/science.aap9559

Another challenge is how people react to the warnings. People do not necessarily trust them, because some regard them as biased by the platform. They may end up ignoring all such warnings.

Another aspect is incomplete content, meaning content that is not necessarily false but leaves things out. The Pew Research Center found that many Americans had trouble distinguishing between false and true information on social media.

This makes it harder for platforms to decide what to label or remove.

What Feels Missing:

One thing that stands out to me is context. Labels are helpful, but they don’t always explain enough. If someone is just scrolling, they might not even pay attention to the warning.

Another issue is the algorithm. Platforms push content that gets attention, and attention usually comes from bold or emotional posts. That type of content is also the easiest to misinterpret.

This connects directly to how I scroll. I usually notice the headline or the main image first. If it looks interesting, I might stop, but most of the time I just keep going. That makes it really easy for something misleading to stick without me fully thinking about it.

Suggestions for Improvement:

I believe another way to improve the situation would be to include more information within the actual post rather than placing it into a separate label. When the content explains itself, it becomes less likely to be overlooked.

Another solution is to slow down the process of sharing posts. According to research, even minor measures, such as prompting the user to read before clicking share, are effective when it comes to combating misinformation.

Finally, platforms should become more transparent in terms of moderating algorithms and processes behind them because at the moment they work in the background, which makes them invisible to users.

Final Thoughts:

It should be kept in mind that, despite the measures taken by Instagram and TikTok, misinformation remains a very relevant topic. Although platforms do their part and reduce it, they do not completely eliminate it.

From personal experience, I came to an important realization that I sometimes believe everything that appears on social media. Content in many cases can be brief and catchy, and it becomes hard to doubt anything.

To summarise, it should be said that although misinformation is associated with social platforms, this does not depend solely on them. The users’ interaction with content can significantly influence the presence of misinformation on the Internet.

(Blog 5) Planning your creation activity

Blog 5 assignment

Final Blog

Final Assignment blog